I was shown the "Unlimited Detail" videos when I started talking to people about my dissertation, and it has recently appeared again. This provoked me into considering how the technology might work (assuming it is real), and I think that, by altering my compression slightly, it could work.

I am assuming that "Unlimited Detail" is not volumetric, both because of the scanning techniques used to create the data and because it has been stated that it works using "Point Cloud Data", aka voxels.

Using this as a starting point, I considered how this would be represented using my compression. I decided it would essentially consist of a length of empty space, followed by a color, followed by another length of empty space, followed by another color, and so on.
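To make that layout concrete, here is a minimal sketch in Python. The function names, the use of `None` for an empty voxel, and the choice to return trailing empty space separately are all my own assumptions for illustration, not part of the actual compression code.

```python
def encode_line(line):
    """Turn one line of voxels into (gap, color) pairs.

    `line` is a list where None means empty space (an assumption made
    for this sketch) and anything else is a color value.
    Returns the pairs plus the length of any trailing empty space.
    """
    pairs = []
    gap = 0
    for voxel in line:
        if voxel is None:
            gap += 1                    # still inside empty space
        else:
            pairs.append((gap, voxel))  # empty length, then the color
            gap = 0
    return pairs, gap


def decode_line(pairs, trailing_gap):
    """Rebuild the original line from (gap, color) pairs."""
    line = []
    for gap, color in pairs:
        line.extend([None] * gap)
        line.append(color)
    line.extend([None] * trailing_gap)
    return line


# A 10-voxel line with two colored points (think front and back surface).
example = [None] * 3 + [0xFF0000] + [None] * 4 + [0x00FF00] + [None]
pairs, tail = encode_line(example)
print(pairs, tail)
```

A line with only two surface points collapses to two pairs, no matter how long the line itself is.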

If a color is 4 bytes and a length is 4 bytes, and a single line along the X axis contains an average of 2 points (front and back), then a single 1024 x 1024 XY slice would only require 2 x (4 + 4) x 1024 = 16384 bytes, or 16 kilobytes. That means a 1024 x 1024 x 1024 volume would need 16 megabytes. While this is a bit bloated, it could be considerably reduced using my frequency compression. I haven't actually mentioned much about frequency compression in this blog before, so I'll explain it here.
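The arithmetic is easy to check. This small Python sketch just reproduces the estimate; the function and parameter names are made up for the example, and the defaults are the figures assumed above.

```python
def raw_size_bytes(size=1024, points_per_line=2, color_bytes=4, length_bytes=4):
    """Estimate the size of a size^3 block stored as (length, color) pairs.

    Returns (bytes per XY slice, bytes per whole volume).
    """
    bytes_per_line = points_per_line * (color_bytes + length_bytes)
    bytes_per_slice = bytes_per_line * size   # `size` lines per slice
    bytes_per_volume = bytes_per_slice * size  # `size` slices per volume
    return bytes_per_slice, bytes_per_volume


slice_bytes, volume_bytes = raw_size_bytes()
print(slice_bytes // 1024, "KB per slice")          # 16 KB per slice
print(volume_bytes // (1024 * 1024), "MB per volume")  # 16 MB per volume
```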

Frequency compression is my way of compressing run-length data. It consists of an array of bytes, where each header byte encodes a 6-bit count and a 2-bit type. The type specifies the frequency of a run of run-lengths (which determines how many bytes each length takes) and the count defines how many run-lengths are associated with it. The frequencies are thus:

Low - lengths above 65535, 4 bytes

Mid - lengths below 65536 and above 255, 2 bytes

High - lengths below 256, 1 byte

Ultra High - Single item (not run-length encoded), 0 bytes.

Using this method, color points can be stored with only 5 or 4 bytes (1 byte frequency and 4 or 3 byte color) and empty space can be stored with 1, 2 or 4 bytes.
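Here is a rough sketch of how the length side of that scheme could be encoded and decoded. Several details are assumptions I have filled in for the example rather than the real format: the numeric type codes, putting the type in the top 2 bits of the header byte, storing the count directly (so at most 63 lengths per header), little-endian byte order, and treating "single item" as a length of exactly 1.

```python
import struct

# Hypothetical type codes; only "2 bits of type" is fixed by the scheme.
LOW, MID, HIGH, ULTRA = 0, 1, 2, 3
FMT = {LOW: "<I", MID: "<H", HIGH: "<B"}      # 4, 2 and 1 byte lengths
WIDTH = {LOW: 4, MID: 2, HIGH: 1, ULTRA: 0}
MAX_COUNT = 63                                 # largest 6-bit value


def classify(length):
    """Pick the frequency class for one run-length."""
    if length == 1:
        return ULTRA        # single item, no length bytes stored
    if length < 256:
        return HIGH
    if length < 65536:
        return MID
    return LOW


def encode(lengths):
    """Group consecutive lengths of the same class under one header byte."""
    out = bytearray()
    i = 0
    while i < len(lengths):
        kind = classify(lengths[i])
        j = i
        while (j < len(lengths) and j - i < MAX_COUNT
               and classify(lengths[j]) == kind):
            j += 1
        out.append((kind << 6) | (j - i))      # 2-bit type, 6-bit count
        if kind != ULTRA:
            for value in lengths[i:j]:
                out += struct.pack(FMT[kind], value)
        i = j
    return bytes(out)


def decode(data):
    """Inverse of encode()."""
    lengths = []
    pos = 0
    while pos < len(data):
        kind, count = data[pos] >> 6, data[pos] & 0x3F
        pos += 1
        for _ in range(count):
            if kind == ULTRA:
                lengths.append(1)
            else:
                (value,) = struct.unpack_from(FMT[kind], data, pos)
                lengths.append(value)
                pos += WIDTH[kind]
    return lengths


runs = [1, 1, 1, 200, 3, 70000, 300]
packed = encode(runs)
print(len(packed), "bytes instead of", 4 * len(runs))
```

The saving comes from neighbouring lengths tending to fall in the same class, so one header byte is shared by up to 63 of them.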

This would mean that a best case scenario would only need about 1 megabyte per 1024 x 1024 x 1024 block in a model.

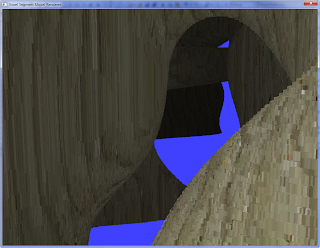

Rendering this would only require ray-point intersection tests, where the X and Y math would be the same for each vertical slice of the model, leaving only the Z to be checked. This could potentially run in real time.

In my opinion, these three are the best use of voxels in computer games which exist today. Of course, that isn't saying much, when you consider that two of them don't even do it properly.
